Shiga University, Faculty of Data Science

Computer Vision Laboratory

News & Topics

- 2025.08.01 [Presentation] Paper accepted at an ICCV 2025 Workshop

- 2025.04.12 [Journal] Our paper on fishing ground estimation was published in PLOS ONE

- 2025.03.21 [Presentation] Paper accepted at IGARSS 2025 in Brisbane

- 2024.03.16 [Presentation] Two abstracts accepted at IGARSS 2024

- 2024.01.02 [Journal] Our paper on learning-based bathymetry upsampling was published in IEEE Access

Research Themes

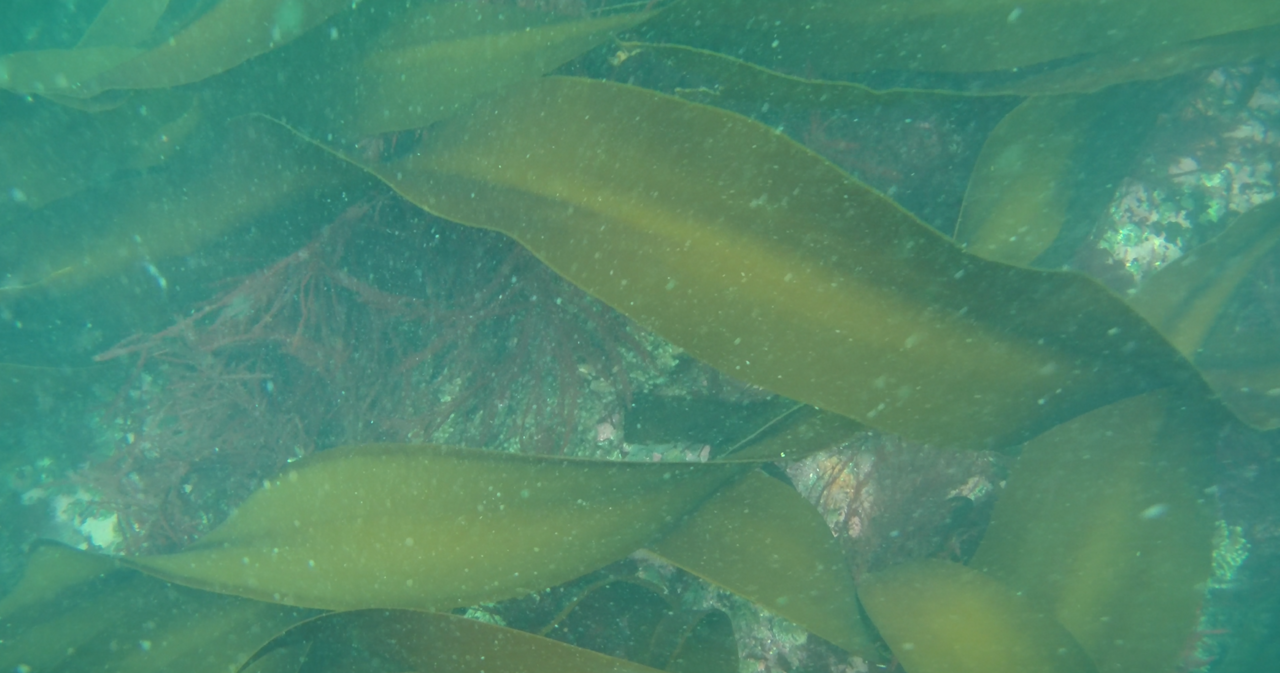

Computer Vision-based Ocean Environment Monitoring

We conduct research on automatically measuring marine environments, such as seagrass and seaweed beds, from aerial and underwater images using image recognition technologies.

Research Overview

Focusing on seagrass and seaweed beds along the Japanese coast, we aim to construct a “Digital Twin of Blue Carbon Ecosystems” that quantifies the dynamics of organic carbon derived from these ecosystems. To do so, we integrate observations of vegetation production and export, vessel-based field surveys including water and sediment sampling, decomposition experiments, and ocean model simulations. Through this integrated approach, we seek to quantitatively assess carbon transfer from coastal vegetated habitats to offshore carbon reservoirs in Japan and to generate future projections under climate change scenarios, thereby contributing to climate change mitigation efforts.

Our research group develops image recognition technologies to automatically measure marine environments, such as seagrass and seaweed beds, from aerial and underwater imagery. These technologies will be utilized in the Digital Twin project to estimate ecosystem productivity and carbon dynamics.

Related Projects

- https://projectdb.jst.go.jp/grant/JST-PROJECT-23826975/

- "Objects measurements for degraded underwater scenes", https://kaken.nii.ac.jp/en/grant/KAKENHI-PROJECT-18H03263/

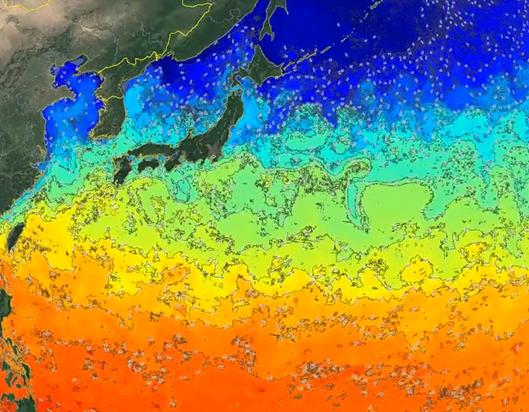

Deep Learning-based Ocean Weather Forecasting and Its Applications

We conduct research on ocean–atmospheric forecasting and its applications using deep learning.

Research Overview

The goal of this research is to develop a new class of technologies centered on an Earth System Foundation Model—a deep learning–based generative model trained using physical simulation outputs and data assimilation results as supervisory signals—along with downstream tasks built upon this foundation model.

To achieve this goal, we construct a foundation model that reflects the unique characteristics of meteorological data. Based on this model, we develop weather forecasting methods and learning-based data assimilation techniques. Furthermore, to validate the performance of the foundation model, we develop explainable weather forecasting methods built upon the model.

Related Projects

- "Development of a Learning-Based Data Assimilation Method Using an Earth Environmental Foundation Model", https://kaken.nii.ac.jp/en/grant/KAKENHI-PROJECT-25K03183/

- "OceanVision: Ocean Weather Forecasting by Integrating Physical Models and Machine Learning", https://kaken.nii.ac.jp/en/grant/KAKENHI-PROJECT-21H04913/

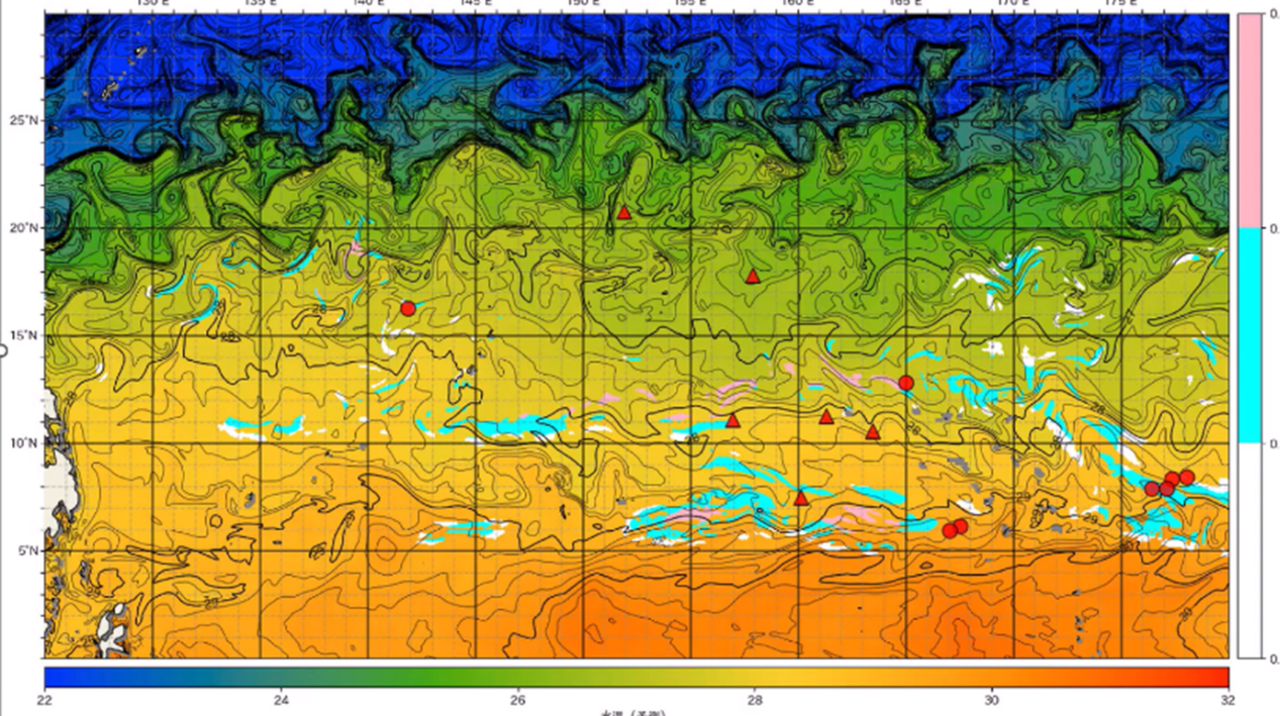

Pattern Recognition-based Potential Fishing Ground Prediction

We conduct research on AI-based technologies to estimate “good fishing grounds.”

Research Overview

Our goal is to establish Marine Fisheries AI Technology (FishTech)—an integrated framework combining fisheries and oceanographic domain knowledge, marine sensing technologies, and AI—and to create a sustainable fisheries model that balances economic efficiency with resource conservation.

Sensor data collected during fishing operations are analyzed and processed using novel technologies that incorporate domain knowledge of fish ecology and ocean physics into pattern recognition and data assimilation techniques. Through this approach, we generate operational support information and mid-term fisheries management strategies.

Through collaboration among ocean meteorology, fisheries science, and information science, particularly image-based pattern recognition, we aim to explore new applications of pattern recognition in the marine and fisheries domains.

Related Projects

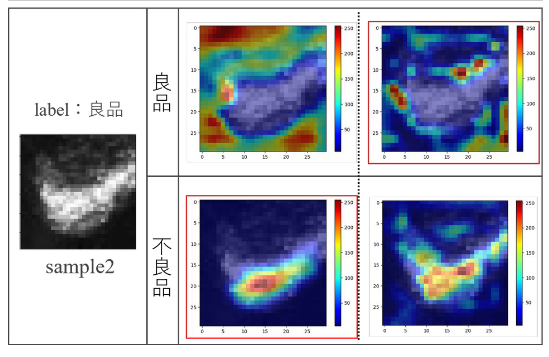

Industrial Applications of Image Recognition Technology

We conduct research on the application of image recognition technologies across various industrial fields, including manufacturing, construction, agriculture, retail, and broadcasting.

Research Overview

Many of these research themes are carried out as collaborative projects with industry partners.